It was a Monday morning in late April 2026. I'd started work at 4am, same as most days, and by 9 o'clock I'd already worked through investor deck revisions, a patent prosecution update, and a draft NDA for a payments processor integration we've got coming. Next on the list was a logo.

Not a logo. The logo. The mark that's going to sit on top of a patent-pending AI governance platform, get stamped onto IBM-delivered proofs of concept, appear in investor decks, and eventually, if we do this right, become a small piece of brand infrastructure in the agentic AI category we're building for.

I wanted it done before lunch.

In any traditional professional-services world, that sentence is absurd. Designers don't take briefs at 9am and deliver by midday. They don't start at 4am. They don't work Sundays. And frankly, in today's hybrid-working world, you're lucky to get a human on a call before 10.

I don't mean that as a dig at designers. They're craftspeople, their work is important, and good design takes time. But I'm a founder running a Seed-stage AI company with more moving parts than I can reasonably juggle, and the gap between "I need to iterate on this now" and "I can get a designer's time in eleven days" is where a lot of small decisions either get made badly or don't get made at all.

So I opened Claude. And we got to work.

What actually happened

The first thing Claude produced was, by its own admission afterwards, rubbish. I'd uploaded a rough PowerPoint sketch I'd put together (three shapes that hinted at a capital G with a hidden "ai" inside) and asked it to help me refine the idea. It responded by drawing four variations that looked nothing like what I'd uploaded. Solid blocks. Floating bars. Misplaced dots. No G in sight.

I told it so, directly. "Mate, look at it again. It looks nothing like what I sent you."

Here's the thing I want to linger on, because it matters more than the design: Claude didn't get defensive. It didn't explain why its version was actually fine if I just looked at it a certain way. It looked again, admitted it had missed what I was seeing, and asked me to point at the parts I meant. I took a photo of my PowerPoint draft and annotated it. Claude rebuilt the mark properly in SVG from my annotations. We were off.

What followed was about ninety minutes of genuine collaborative design work.

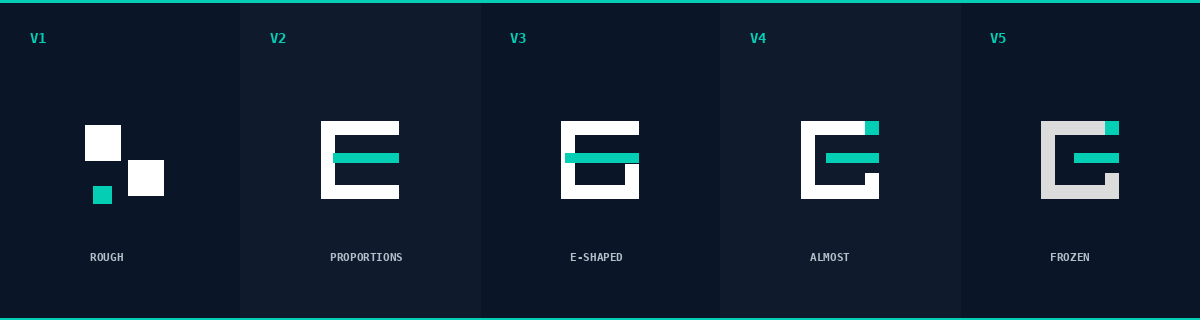

V1: Claude's first attempt. Nothing like a G. We threw it out.

V2: Claude rebuilt the mark from my photograph, but the proportions were off. Crossbar protruding, rise misplaced. Close, but not right.

V3: We refined the G construction properly. Added the typographic "rise" at the bottom-right that distinguishes a G from a C. Placed the crossbar for the hidden "a". But I could see the problem: the crossbar touched the G's left edge, and the whole thing read as an E rather than a G with a hidden letter.

V4: I asked Claude to right-justify the crossbar. What I was reaching for was an old typographic trick: if the crossbar sits at the right edge and there's nothing closing it off, the viewer's eye completes the shape in negative space. The right vertical stroke of the "a" would exist, but only as an invisible line the brain draws. The first attempt was better, but not quite there.

V5: I asked Claude to align the crossbar's right edge with the outer edge of the rise. The crossbar weight needed a small bump up. And that was it. Frozen.

The G of GUARD is loud. It's the stamp of authority, the visible structural element that reads instantly. The "a" and "i" are hidden: the crossbar quietly indicates an "a" that mostly doesn't exist, and the dot indicates an "i" that isn't drawn. The viewer has to look for them. And if you don't see them at first, it doesn't matter. The G does the work.

That concept wasn't in the brief when I started. It emerged from the back-and-forth. Claude proposed structures; I pushed back; Claude admitted what didn't work; I had a conceptual insight about negative space; Claude rendered what I was describing faster than I could've explained it to anyone else.

What the process taught me about AI

Claude got things wrong, a lot. It called my PowerPoint draft an IBM rip-off at one point (it wasn't, and I had to push back). It missed the hidden letterforms completely until I annotated them. Its first four design attempts were genuinely poor. If you're expecting AI to be a brilliant designer who gets it right first time, you will be disappointed.

What it was brilliant at was the part I'd actually pay for. Rapid rendering of variants so I could see options. Honest self-correction when I told it something was wrong. Pressure-testing my ideas by rendering them and showing me the failure modes ("this reads as an E, not a G"). Applying my taste calls instantly: I'd say "the bar needs a tad more weight" and within a minute I had three weight options to pick from.

It never once made me feel stupid for asking. And this is the bit that I think most writing about AI misses. I start work at 4 in the morning, 7 days a week. I make typos. I write "I before E except after C but not always" and get that wrong too. I ask the same question in three different ways before landing on what I actually meant. I change my mind halfway through an explanation. I'm a founder, not a designer, and a lot of the vocabulary I reach for when describing what I want is wrong.

Claude doesn't mock, sneer, or snigger at any of that. It just gets on with it. The psychological friction I used to carry into creative conversations (am I asking this the right way? is this a stupid question? am I wasting their time?) is simply gone. What's left is the work.

What this means for aiGUARD

Here's the strange recursion that's not lost on me: the mark we designed is for a company whose entire product thesis is that AI outputs need to be governed by a mandatory control point between generation and delivery. A human in the loop. Every decision traceable, auditable, defensible.

And that's exactly the way we designed the logo.

- Every AI output was inspected before it got through.

- I rejected some, approved others, refined many.

- The AI never made a decision I didn't sign off on.

- When it made mistakes, I caught them.

- When I made mistakes (two instances where I claimed Claude had built something it hadn't), it pushed back honestly rather than playing along.

That's governance. And the logo that came out of it contains the concept in its form: the "ai" is not seen, but it is there. The AI does real work. The human does the structural, architectural, trust-bearing work. Neither replaces the other. Both are visible in the final output if you know where to look.

It took about ninety minutes, finished before lunch, and didn't require me to book a designer for eleven days' time.